Hi,

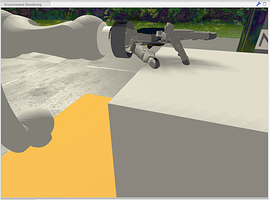

I recognized something weird about the transfer function. I want to figure out if I can use the efforts of the joints to detect a contact with an object. I started a little experiment with the arm_robot and let the index finger collide with a box. Like this

At first I have to say I modified the arm robot model and scaled down the Collision boxes around the fingers so I know this has influence of the controller but the controller seems to do something different only because I publish the messages with the transfer function.

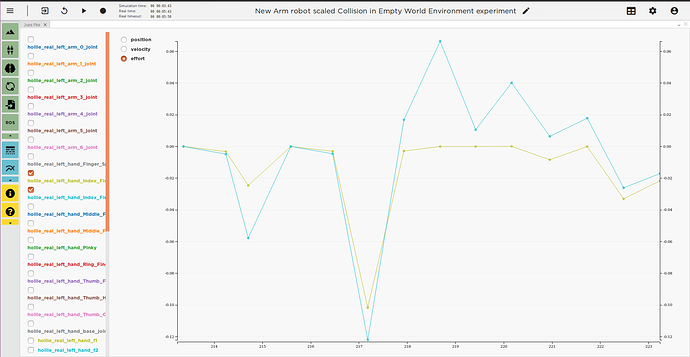

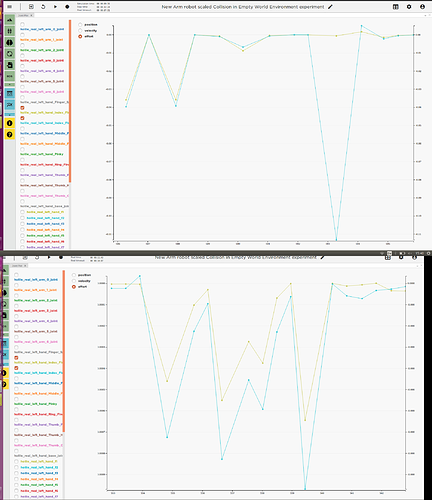

When I publish the ros message via the transfer function I get this joint plot (The finger begin to move at 216)

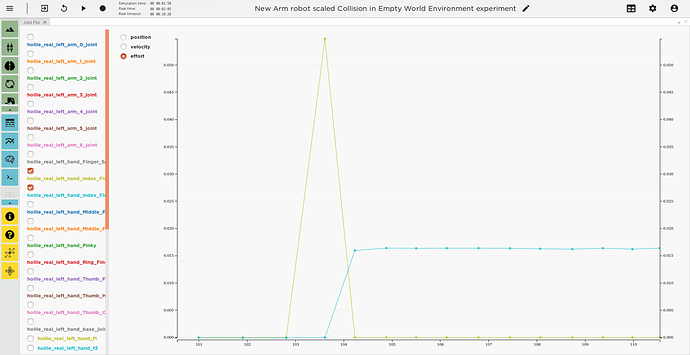

When I deactivate the transfer function and publish the same values via the ros terminal I get this Joint plot:

In addition when the robot arrives at a position via the transfer function the Arm is shaking a little bit, but when it gets to a position via ros terminal it’s not.

Is there a reason why the controller behaves in different ways?

Here is the code I used in the transfer function:

@nrp.MapRobotPublisher(“topic_elbow”, Topic(’/robot/hollie_real_left_arm_3_joint/cmd_pos’, std_msgs.msg.Float64))

@nrp.MapRobotPublisher(“topic_wrist”, Topic(’/robot/hollie_real_left_arm_6_joint/cmd_pos’, std_msgs.msg.Float64))

@nrp.MapRobotPublisher(“topic_index_prox”, Topic(’/robot/hollie_real_left_hand_Index_Finger_Proximal/cmd_pos’, std_msgs.msg.Float64))

@nrp.Neuron2Robot()def hand_effort_experiment (t, topic_elbow, topic_wrist, topic_index_prox):

#move arm to box topic_elbow.send_message(std_msgs.msg.Float64(1.55)) topic_wrist.send_message(std_msgs.msg.Float64(1.5)) #finger collision with box topic_index_prox.send_message(std_msgs.msg.Float64(1))

Best regards and many Thanks in advance

Katharina