Dear all,

Since I want to use the polarization pattern of the sky in my NRP simulation,

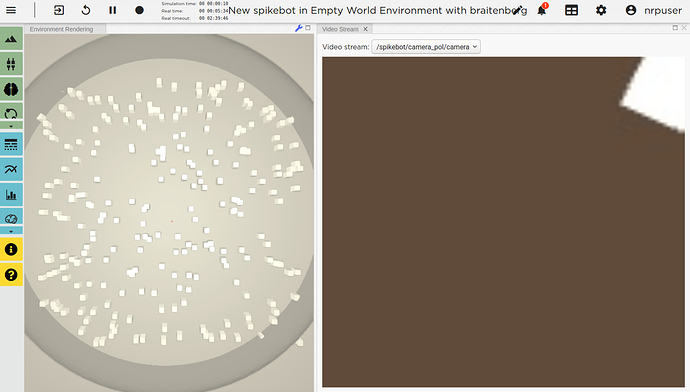

I started to implement a sky dome in my Gazebo world file. A standard camera model taken from the Gazebo example will detect the polarization pattern on the sky dome and convert it into spike trains.

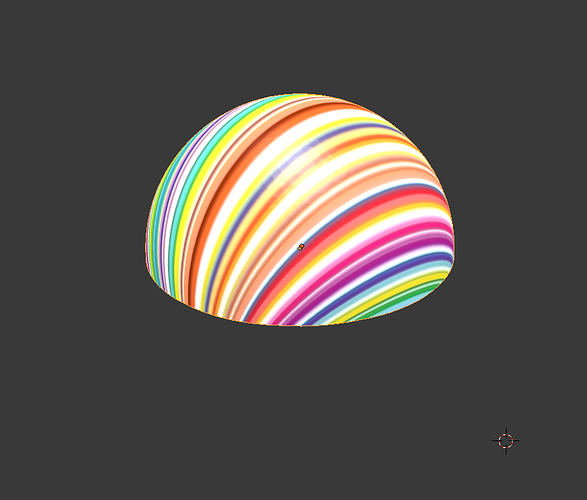

I designed the dome as a mesh in Blender and exported the mesh as an STL file (no DAE conversion was possible). I then converted the STL file to DAE at https://anyconv.com/stl-to-dae-converter/.

I wrote an SDF model file for the sky dome in the “NRP/Models” library and added it to “NRP/gzweb/http/client/assets” and “.gazebo/models”. I added the mesh to the world file of the simulation (see environment.txt) and added an image texture. I also tried to directly use the material imported from Blender through the DAE file, but in that case the NRP simulation crashes every time.

The image texture consists of coloured stripes (see stripes.jpeg), and the sky as seen by the robot should look like the skydome screenshot. The skydome model is included in the world, but the sky recognized by the robot's camera (see robot.txt) is always a single colour (see screenshot), even though the viewing angle of the camera is 2 radians. The colour changes when the robot moves. Nevertheless, all image pixels always have the same colour, except when a small white object appears in the image (see screenshot).

Somehow the image-to-mesh mapping must be wrong, or the lighting, or something like that. How can I get a clear picture of the image?
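Regarding the image-to-mesh mapping: STL stores only triangle geometry and no texture (UV) coordinates, so one thing that could be checked is whether the converted DAE file contains any texture coordinates at all. Below is a minimal sketch in Python, assuming the file is a standard COLLADA document. The function name `has_texcoords` and the file name `skydome.dae` are hypothetical and only for illustration.

```python
import xml.etree.ElementTree as ET


def has_texcoords(root: ET.Element) -> bool:
    """Return True if any <input> element declares semantic="TEXCOORD"."""
    for elem in root.iter():
        # Drop a possible XML namespace prefix such as "{...COLLADASchema}input".
        tag = elem.tag.rsplit("}", 1)[-1]
        if tag == "input" and elem.get("semantic") == "TEXCOORD":
            return True
    return False


# Hypothetical usage (the file name is an assumption):
#   root = ET.parse("skydome.dae").getroot()
#   print(has_texcoords(root))
```

If this returns False, the mesh carries no UV mapping, which might explain a uniformly coloured sky, but this is only a guess and not a confirmed diagnosis.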

Thanks a lot and best regards,

Thorben

model.sdf.txt (17.9 KB)

environment.txt (5.6 KB)